I reframed photos using Gemini

Saturday 3rd January 2026

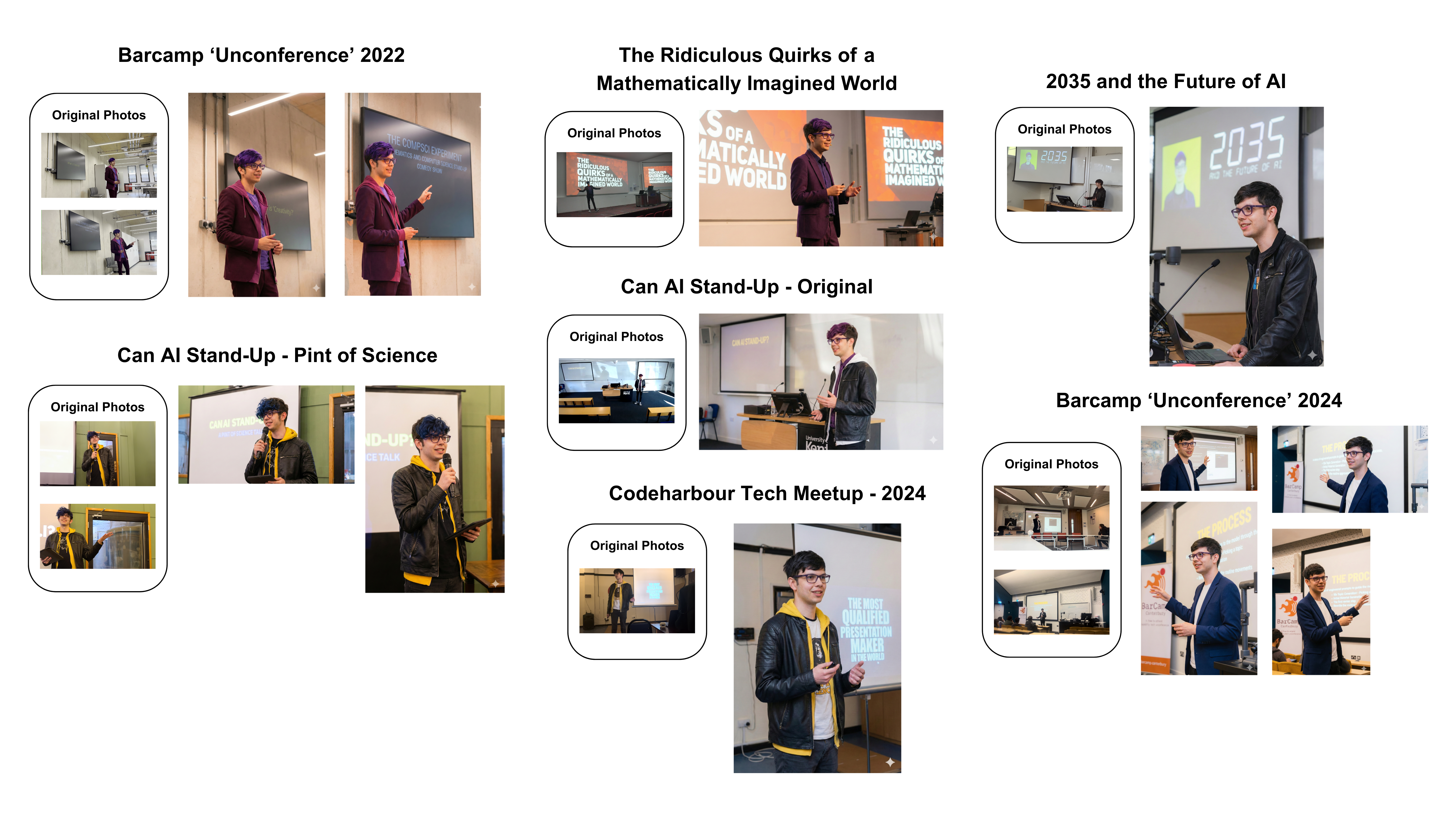

This post does not feature a single photo of me.

Introduction

The other day, I saw a sponsored post on Instagram that showed how we can use Google Gemini’s features to upscale and improve the quality of our pictures. The example in the video was of a concert performance, but I wondered (in a moment of nostalgia) if I could use this on old pictures of me performing.

But I wanted to push it further. Could I use Gemini’s image generation capabilities to reframe my pictures? I wanted to see if the model could act as an “in-the-moment” photographer for events that happened months or years ago.

The Process

To generate these photos, I supplied the model with a low-quality photo from a performance (usually a screenshot from the video recording) and a clear, professional photo of myself to help it along. It’s important that the model understands what I look like.

Here is a sample of the prompt that I provided to Gemini. I tried to keep it as simple as possible so that I could reuse it for every photo, whilst clearly steering the model towards my desired output.

I've also given you a picture of what I look like.

Please can you improve the quality of the image of me performing and turn it into a close up mid shot in high resolution, while accurately representing the original picture. You can improve the lighting and make me smile slightly while talking. Make sure the presentation slides behind me are still visible. Please make my hair styled nicely.

The outfit should remain the same as the original performance, and the [variant] hair colour. Make sure you also include the dark purple glasses from the original picture of the performance.
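Since the only part of the prompt that changes between photos is the [variant] hair colour, the reuse can be sketched as a small template. This is a minimal illustration rather than what I actually ran: the function name `build_prompt`, the field name `hair_colour`, and the example value "brown" are all hypothetical, and the snippet only builds the text. It does not call Gemini.

```python
from string import Template

# Hypothetical template: the wording is the prompt above, with the
# "[variant]" placeholder turned into a named field ($hair_colour).
PROMPT_TEMPLATE = Template(
    "I've also given you a picture of what I look like.\n\n"
    "Please can you improve the quality of the image of me performing and "
    "turn it into a close up mid shot in high resolution, while accurately "
    "representing the original picture. You can improve the lighting and "
    "make me smile slightly while talking. Make sure the presentation "
    "slides behind me are still visible. Please make my hair styled "
    "nicely.\n\n"
    "The outfit should remain the same as the original performance, and "
    "the $hair_colour hair colour. Make sure you also include the dark "
    "purple glasses from the original picture of the performance."
)


def build_prompt(hair_colour: str) -> str:
    """Fill in the one detail that varies from photo to photo."""
    return PROMPT_TEMPLATE.substitute(hair_colour=hair_colour)


if __name__ == "__main__":
    # "brown" is only an example value, not taken from the real photos.
    print(build_prompt("brown"))
```

Keeping a single varying field means every photo gets the same instructions, so differences in the output are easier to attribute to the input images rather than to the wording.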

The Results

The answer? Yes, it could… but I found the results bizarre.

I made a few observations:

- Context Retention: The model excelled at backgrounds. Presentation slides translated exceptionally well, preserving the actual text and context of the lecture. This impressed me, as image generation models often struggle to render legible text.

- Perspective Integration: Scale was handled effectively. Even when the model moved my position in the frame, I looked like I naturally fit into the scene.

- The Uncanny Valley: While the generated figures clearly look like me, they feel slightly "off." The model captured the likeness, but you can tell it's not quite a genuine photo.

- The Reframing: The model shone at changing the angles of the shots without losing the context of the original scenes.

A Warning

However, I also made a blatant error in my experiment. My reference photo did not show me wearing glasses, so I prompted the model to add them instead. As a result, the glasses changed shape and style from photo to photo, an inconsistency that breaks the immersion for anyone who knows I wear the same pair for every show!

Conclusion

This experiment really shows the potential of AI to recontextualise important moments, not just by upscaling them but also by reframing them. Through my own mistake, it also highlights the need for precise and accurate reference data. Above all, it shows how far we have come in image generation capabilities.